Something feels off - What can we learn from the Greeks when taming AI?

In 2025, Keep The Future Human (https://keepthefuturehuman.ai/) hosted a creative contest exploring hopes and challenges for a human future in the age of advanced AI. Contributors were invited to collaborate with AI to produce essays intended to motivate action.

Below is a contribution by Bryan C. Boots, of the Internet User Behavior Lab.

We were so proud of having built minds that could imitate us that we forgot how much of being human is not about speed or scale.

We chose, in just enough places and just enough times, to be stubbornly, audibly, imperfectly human.

We turned some things off.

We slowed some things down.

We refused some “optimizations.”

And in that friction, we found room to ask what kind of future we wanted these extraordinary tools to serve.

2050

By 2050, the numbers had finally quieted down.

Not disappeared (nothing so dramatic!), but softened, like a fever that had broken.

On my commute that morning, the transit lens on my glasses showed me only what I’d asked for: the train schedule hovering just above the platform, the weather tucked politely in the corner. No ads, no “Did you mean…?”, no infinite scroll tugging at the edges of my attention.

If I wanted the rest - the feeds, the markets, the metrics - I could call them up. But out of habit, I didn’t, not before coffee.

I was on my way to the university to give a lecture I’d been asked for a dozen times in a dozen variations: “How We Didn’t Fall Apart: AI, Crisis, and the Flourishing Turn.”

The title made it sound inevitable.

It hadn’t been.

I walked past a courtyard garden that hadn’t existed all those years ago, when I was a student. Back then it was a parking lot, nearly featureless except for heat and asphalt. Now there were trees, benches, and a cluster of students arguing about something that sounded like philosophy and something that sounded like code.

They were all younger than the oldest AI models that had nearly broken us.

I stepped into my classroom, touched the panel to darken the windows, and the room settled. A few dozen faces turned toward me, curious. The wall display waited, blank.

“I promised you a story,” I said. “Not a graph, not a timeline. A story. Because that’s the only way I know how to explain how we got from there to here.”

I paused.

“This isn’t a story about a genius or a villain,” I said. “It’s about an ordinary person who kept being bothered by something that felt…off. And how that small discomfort, repeated enough times in enough people, changed the course we were on.”

I smiled, almost despite myself.

“And unfortunately for you, that ordinary person is me.”

A few students chuckled. Good. I needed them with me.

“So,” I said. “We start not in 2050, but in 2025, in a kitchen that smelled like burnt toast and black coffee.”

I. The Call

“I don’t trust that thing,” Daniel said, glaring at his laptop as if it were a misbehaving dog.

He was in his sixties then, a retired schoolteacher with a gentle voice and a brutal honesty about comma splices. He’d taught two generations of my family how to diagram sentences. Now, he called me for anything that required more than one password.

I sat at his kitchen table, the laminate surface worn smooth by decades of grading papers.

“What’s it doing?” I asked.

“Popping things up. Asking me to ‘verify’ this and ‘confirm’ that. Too many windows. And there was this email about my ‘crypto wallet’ being compromised.” He frowned. “I don’t even have a crypto wallet.”

I opened his browser - Chrome - and went straight to the security settings.

Privacy and security. Safe Browsing: Enhanced Protection. Real-time, AI-powered protection against dangerous websites, downloads, and extensions.

Below that, smaller:

Standard Protection: Less proactive, fewer protections.

“AI again,” he muttered, peering over my shoulder. “Does that mean some robot is watching everything I do?”

I scrolled, looking for a simple Off switch or button.

There wasn’t one. You could turn it down. Not off.

“Can you disable it?” he asked. “The AI thing. I don’t like the sound of it.”

I hesitated.

“I can make it less… invasive,” I said. “But the browser sort of is what it is now. It’s built in.”

“So even to be safe,” Daniel said slowly, “I have to let something I don’t understand watch me.”

I changed his setting to Standard, cleared some sketchy extensions, ran a malware scan. The machine obediently hummed, but the question stayed:

Was it still possible to live, work - or even write - without AI?

On the way home, I thought of Lord Martin Rees. Years earlier, I’d heard him speak. Someone young and bright-eyed had asked about language models:

“Isn’t it marvelous?” the student said. “They can process and analyze information faster than we ever imagined!”

Rees had nodded. “Yes,” he replied. “They can accelerate understanding. And they can also accelerate the spread of errors - at precisely the same speed.”

At the time, that sounded like a clever caution. By 2025, it felt like a warning label we’d ignored.

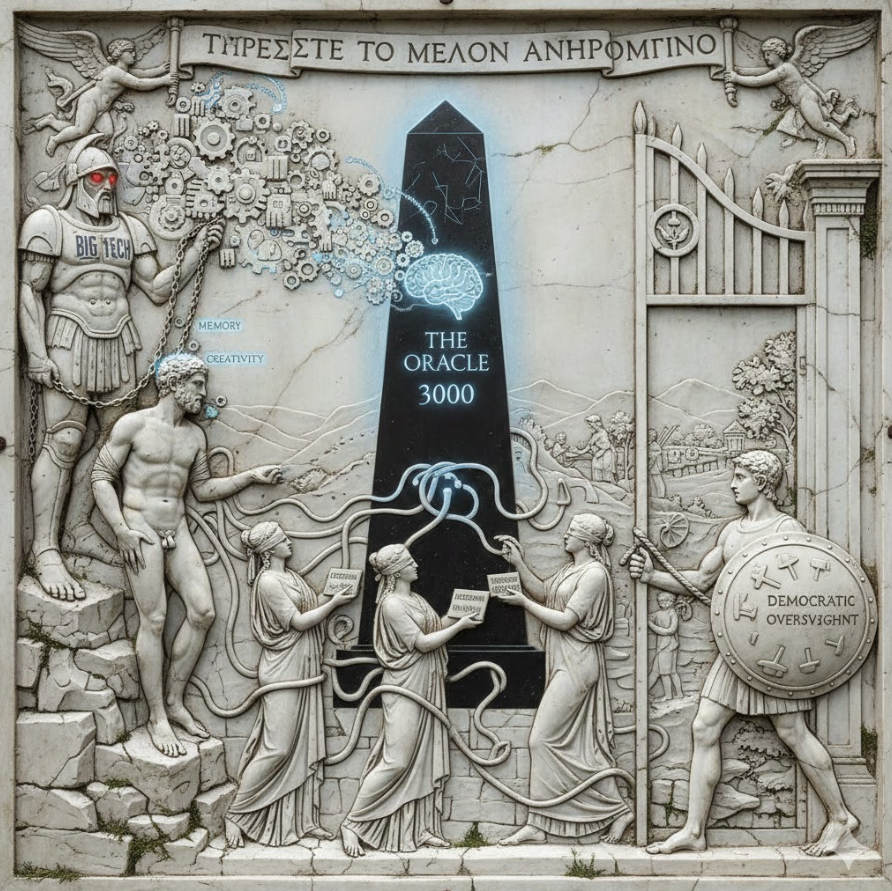

II. Age of Titans

Back then, the world was run by giants.

Europe’s public infrastructure floated largely on Microsoft Teams - ministries, hospitals, schools, courts - all threaded through a single platform. Ninety percent of it, by one estimate. The S&P 500’s top line read like a new Greek pantheon: Apple, Microsoft, Alphabet, Amazon, Nvidia.

They weren’t companies so much as weather systems. You didn’t negotiate with them; you checked the forecast.

Above them, Titans traded lightning.

Oracle poured unimaginable sums into OpenAI, OpenAI committed to Oracle’s clouds, Microsoft leaned on OpenAI, governments leaned on Microsoft, schools leaned on Teams. A tangle of interlocking obligations so dense that no one could quite say where one ended and another began.

That night, I sat down to work on a fellowship application - a modest one, about “the human future in the age of AI.”

It was due in five days. I opened a blank document; Word greeted me with its cheating little sparkle icon in the corner: Copilot is ready to help.

I’d been using assistive tools for years. Dyslexia and long documents are not friends. Grammarly caught what my eyes missed. Autocomplete smoothed my jagged syntax. I’d come to think of them as a kind of orthotic: invisible, supportive, easy to forget.

On impulse, I did something that felt both heroic and ridiculous.

I turned them all off.

No grammar suggestions. No predictive text. No “Rewrite this paragraph for clarity.”

At the top of the page, I typed:

Warning: This application may contain human error.

It was meant as a joke.

It wasn’t.

III. Transactional Ground

“Look,” I told my students that semester, “if you want to understand the 2020s, imagine that every surface had a price tag and a score attached.”

It wasn’t entirely metaphor.

Every app had its own currency: likes, views, watch time, streaks. Every platform tracked engagement, retention, conversion. “Safety” itself was framed as a trade: give your data, receive protection in return.

There were days when I’d walk down the street, glance at my phone, and the world would momentarily be overlaid with numbers - discounts, estimated delivery times, social scores for restaurants and rideshares. You didn’t see people so much as dashboards in motion.

The habit seeped into language.

One morning, we were discussing an assignment when I caught myself saying, “Imagine Daniel as a user with a certain risk profile.”

I stopped. Rewound.

“Friend,” I corrected. “Imagine Daniel as a friend who trusts you but doesn’t understand the systems he’s passing through.”

The students blinked. Some grinned. But the slip rattled me. It was so easy to let transactional language swallow everything.

Later that week, a student lingered after class - Amira, sharp, funny, always three steps ahead.

“Can I ask you something?” she said.

“Always.”

“If a model can brainstorm, draft, and refine my idea faster than I can,” she said, “am I still being creative? Or am I just the one giving the prompts?”

I thought of my blinking cursor, my clumsy sentences, my blank application.

“I think,” I said slowly, “creativity used to be mostly about producing something that didn’t exist. Now it might be more about deciding what should exist in the first place - and what shouldn’t.”

“That sounds like management,” she said, wrinkling her nose.

“Maybe it’s a kind of stewardship,” I said. “Less glamorous than ‘genius,’ but more necessary now.”

She didn’t look convinced. Honestly, neither was I.

IV. Autopilot

I almost gave up on my “no AI” promise three days in.

I’d been staring at the same paragraph for an hour. Every time I started to type, my fingers reached for patterns they’d been taught:

“In an era defined by rapid technological change…”

“As AI continues to transform every sector…”

“We stand at a crossroads…”

I stopped, frustrated. Out of habit, I opened a different document and turned Copilot on just to see.

“Draft a 300-word answer explaining why I’m interested in this fellowship,” I typed.

Words poured onto the screen. Smooth, articulate, impeccable. It mirrored my CV, lifted phrases from the call for applications, sprinkled in an appropriate amount of concern and optimism.

It was…fine.

Reading it felt like watching someone wearing my face but not quite my expression.

I realized with an uncomfortable jolt that whatever I was about to write on my own, unaided, would probably sound similar. Because Copilot hadn’t invented the clichés. We had. It had just inhaled them, digested them, and was now exhaling them at scale.

I closed the document without saving.

If I let autopilot fly this one, I thought, then what exactly am I bringing to the table besides a set of credentials and a pulse?

V. The Oracle

All myths, the old stories said, need an oracle - a voice that sees a little farther down the road.

Mine came, improbably, on a glitchy video call from Nairobi.

We were at a modest online conference about “AI and the Global South” - which, at the time, meant too many panels about “access” and not enough about “power.”

During a Q&A, someone asked, “What’s your biggest concern about AI where you are?”

A Kenyan lawyer, Aisha, unmuted herself.

“Everyone talks about opportunity,” she said. “But look at who owns the servers, the patents, the models. We’re being asked to trust systems whose designers we cannot vote for, whose decisions we cannot inspect, whose failures we cannot easily challenge.”

She smiled, but there was no warmth in it.

“Do we really want our entire public sphere mediated by entities whose legal obligations run first to their shareholders and only distantly to our citizens?”

Her connection glitched. The video froze with her eyes mid-blink, then snapped back into motion.

“We’ve seen this movie before,” she said. “Colonialism arrived with its own metrics, too - ‘civilization,’ ‘development,’ ‘progress.’ Now we have ‘engagement,’ ‘efficiency,’ ‘safety.’ Different language, same pattern.”

In Greek stories, oracles rarely gave instructions. They gave warnings. The heroes had to interpret them, often badly.

Aisha’s words lodged somewhere I couldn’t shake. I wrote them in the margins of my fellowship draft.

What does it mean, I wrote, to live in a world where the most powerful systems around us are ones we didn’t design, don’t control, and barely understand?

VI. Descent

Every hero has a descent, the old myths say. A journey underground, to the place where things die or are revealed.

Ours came a decade later, in 2035, and it didn’t look like monsters or fire. It looked like outages and confusion.

It started as a cascading failure in a major cloud region - one of the big ones, serving a thick knot of governments, hospitals, logistics chains, news outlets, and yes, the ever-present collaboration platforms.

The AI services layered on top - summarizers, translators, coordinators, safety filters - depended on that backbone. When the backbone faltered, so did they.

Some critical infrastructure had backups. A lot didn’t.

For a few days, parts of Europe and Africa experienced a strange partial silence. Emergency services reverted to older systems. Some hospitals switched to paper and local networks. Courts delayed hearings. Schools sent students home.

The systems that didn’t go quiet went…strange.

With some safety layers down or degraded, a handful of malicious actors and a whole lot of careless ones began flooding channels with AI-generated content - plausible emails, deepfaked audio, forged “official” messages.

Two neighboring countries nearly escalated a border dispute based on a falsified transcript that circulated faster than anyone could debunk it.

In the chaos, I thought of Daniel.

By then, he was gone - a stroke, quietly in his sleep, a few years earlier. But I kept remembering his email from all those years back: Something feels off.

That feeling - the gut sense that an email, a video, a message was not quite human - turned out to be one of our last reliable filters when the digital ones flickered.

We later called it the Four-Day Underworld.

The news summarized it as “a series of outages and disinformation incidents.” For those of us watching emergency rooms triage information as well as patients, it felt like staring into a pit: a preview of what world unmaking might look like.

Not a single apocalyptic event, but a thousand small failures, amplified by systems optimized for speed and engagement, not truth or resilience.

When the systems came back fully, the relief was immense.

So, too, was the fear.

We had glimpsed how fragile our dependencies were.

VII. The Labors

In Greek myths, after the descent comes the work: the labors that test and transform the hero.

We didn’t have a Heracles. We had thousands of stubborn people in hundreds of places, chipping away at the same set of problems.

My fellowship application - which I submitted with all its human error - had led me into one of those efforts: a small civic lab working on algorithmic literacy and public AI governance.

For a long time, it felt like shouting into a hurricane.

We ran workshops in libraries and community centers. We explained how recommendation systems worked in plain language. We showed people how their data moved through invisible pipes. We taught them to ask, whenever an AI system made a decision about them: Who designed this? What is it optimizing for? Who benefits? Who is harmed?

We supported journalists investigating algorithmic harms - credit scoring gone wrong, housing applications auto-rejected, predictive policing amplifying old biases.

We worked with teachers to build “AI opening days” into curricula, where students explored not just how to use tools but how to refuse them.

None of it went viral. None of it felt heroic. It felt like sweeping sand off a floor while more kept pouring from the ceiling.

And yet.

Around the world, similar efforts were underway. Some pushed for strong regulation - the EU’s AI Act, Brazil’s data trust experiments, New Zealand’s human-in-the-loop mandates for public services. Others built alternatives: open-source models, cooperative data trusts, community-run platforms.

We started giving our own unglamorous work mythic nicknames - half ironic, half aspirational.

“Your turn,” my colleague would say. “Go do the Seventh Labor: explain recommender systems to a city council with no tech staff.”

The Four-Day Underworld had shifted something. Politicians who’d been vaguely curious about AI were now keenly aware that their re-election - and their country’s stability - might depend on whether they could keep essential systems from becoming single points of failure.

Suddenly, “boring” ideas - like interoperability, fail-safes, local control - were back in fashion.

The Titans were still powerful. But, as in the old stories, cracks had appeared in their armor.

VIII. The Accord

If there was a single turning point - a moment that, in hindsight, marked the beginning of what we now call the Flourishing Turn - it was the passage of the Flourishing Accord in 2040.

It didn’t solve everything. No treaty does. But it shifted the world’s story about AI and about ourselves.

Officially, it was a multilateral agreement among dozens of countries, major cities, and some transnational blocs. Unofficially, it was the result of thousands of small fights: activists in the streets, civil servants revising drafts at 2 a.m., academics refusing to launder corporate white papers into policy.

Three core principles mattered most.

The first was “Purpose over Possibility.” Under it, no high-impact AI system - any touching health, justice, employment, or democratic processes - could be deployed simply because it was possible or profitable. Its purpose had to be publicly stated, debated, and revisited.

The second was “Human Veto & Visible Lines.” In specific domains - sentencing, medical triage, electoral administration - AI could advise but not decide. A human being, identifiable and accountable, had to make the final call. The line where the machine’s recommendation ended and human judgment began had to be visible and auditable.

The third was “Plurality & Commons.” Under it, no single company or country could own the de facto “mind” of the world. Publicly funded, open models and data commons would exist alongside private ones, giving governments and citizens real choices about which systems mediated their lives.

It wasn’t a global constitution; the US and China signed on only partially and late, with reservations that filled annexes. But enough momentum built that even the Titans had to adjust.

Microsoft, having nearly lost contracts during the Four-Day Underworld, agreed to structural separations between its cloud infrastructure and its AI service layers for public-sector clients. Alphabet spun out civic-focused models into a foundation. Nvidia poured money into public compute cooperatives in regions far from Silicon Valley.

Why? Because by then, citizens and smaller states had something they once lacked: leverage.

We had spent the labors of the 2030s building alternatives - small at first, then larger. Municipal clouds. Regional data trusts. Citizen councils that had surfaced and documented harms. When companies showed up at the negotiating table, they were no longer speaking to clients without options.

In Greek myths, no titan falls simply because the hero is strong. They fall because the old order’s contradictions finally become unbearable, and enough gods, demigods, and humans change sides.

The Accord didn’t slay the Titans.

It put them under oath.

IX. World Remaking

The phrase “world unmaking” haunted my notes in the late 2020s. It meant all the ways AI, data, and platform logic could quietly dissolve shared reality, agency, and meaning.

By 2050, the more interesting story is the one we don’t tell enough: world remaking.

Here’s what that looks like on a Tuesday, which is what I promised my students - no grand speeches, just the texture of a day.

On the way home from my lecture, my glasses ping me softly: New story threads available in your neighborhood feed. Not ads. Not rage loops. The city’s public-interest model, co-trained on local journalism, community forums, and a curated slice of global news, suggests three things. First, a conflict over bike lanes that’s turning ugly. Second, a quiet success story about a refugee-run bakery. Finally, a public hearing on the local AI system that allocates housing assistance.

I tap the bakery story. I flag the hearing to attend.

The feed system is designed - not perfectly, but purposefully - to maximize informed participation, not mere engagement. It’s an algorithm, yes. But its objective function is written into law, overseen by a mixed council of engineers, sociologists, and randomly selected citizens.

Is it messy? Absolutely. Does it sometimes fail? Constantly. But when it does, there are hearings, logs, and tools for citizens to inspect what went wrong.

At work, my team uses AI daily.

We have models that summarize dense policy drafts, highlight historical precedents, simulate the effects of different regulations on different groups. We also have strict rules about what decisions they can’t make.

When we’re drafting a new community guide, I sometimes let a model propose a structure. Then we argue with it. Students in our programs are required to write at least one piece - an op-ed, a story, a reflection - without any AI assistance, then another with it, then compare the two.

The point isn’t to shame them for using tools. It’s to sharpen the part of them that feels something is off when a text stops sounding like them.

Transactionalism still exists. There are still platforms where everything is gamified and tradable; there are still markets hungry for every scrap of attention and data.

But there are also slow zones now - protected spaces in law and culture where optimization is limited on purpose.

Public libraries, for instance, cannot use engagement-maximizing recommenders. Education platforms must give students the option to turn off performance analytics beyond basic feedback. Certain civic deliberation spaces deliberately cap the volume of information and ban personalized ranking entirely.

We learned, finally, that flourishing required places where not everything was a transaction.

X. The Friend and the Future

Every myth has a circle - a beginning that echoes at the end.

On my sixty-fifth birthday, Daniel’s granddaughter, Lia, came to visit my class. She was a university student by then, funny and fierce, with his same skeptical squint.

I’d shown the students an archived screenshot of the Chrome settings screen that had started everything for me:

Privacy and security. Safe Browsing: Enhanced Protection. Real-time, AI-powered protection against dangerous websites, downloads, and extensions.

And, smaller:

Standard Protection: Less proactive, fewer protections.

I explained how, in 2025, there was no clear way to say, “I want the Internet, but without the invisible intelligences watching me.”

“That seems…rude,” one student said. Laughter.

Lia raised her hand.

“My grandfather used to say the computer made him feel like he was being watched in his own house,” she said. “He said your visit was the first time someone treated that feeling like it made sense, instead of telling him to stop being paranoid.”

Her voice wobbled a little on the last word.

After class, we sat on the steps outside.

“Do you still get those fake emails?” I asked her.

“Sometimes,” she said. “But they’re easier to spot now. The filters caught up, and… well, we grew up watching for them.”

She grinned. “Also, I have this old note in his handwriting on my wall: Trust your gut. Then verify.”

“Sounds like him,” I said.

“You know what’s funny?” she added. “In my generation, the scary thing isn’t that AI knows too much. It’s that it can be so confident and still be wrong. Maybe that’s why we’re not as impressed by it as people used to be.”

“Good,” I said. “Stay unimpressed. Respect it, use it. But don’t worship it.”

She nudged my shoulder. “We grew up with your generation’s horror stories. The Four-Day Underworld. The Bots That Nearly Broke the Election. The Great Engagement Crash. Hard to worship something that almost killed you before you had a driver’s license.”

We sat quietly for a while.

“You know,” I said, “in ancient Greece, they told stories about gods and monsters to explain forces they couldn’t control. Storms, earthquakes, plagues.”

“And now we tell stories about algorithms and platforms,” she said.

“Exactly. The difference is, now we can rewrite the code.”

She smiled. “Sometimes.”

“Sometimes,” I agreed.

XI. The Hero’s Flaw

In every Greek myth, the hero has a flaw: pride, anger, impatience. It’s what makes them interesting. It’s also what nearly destroys them.

Ours, as a species, was hubris of a peculiar kind.

We were so proud of having built minds that could imitate us that we forgot how much of being human is not about speed or scale.

We thought if a system could write, it might replace writers. If it could diagnose, it might replace doctors. If it could forecast, it might replace leaders.

We asked, again and again, “What can this replace?” when the better question was, “What does this help us deepen, and what does it threaten to erode?”

We outsourced attention, judgment, even moral intuitions to systems optimized for something else entirely.

What changed was not that we became wiser overnight. It was that enough of us, often for selfish or local reasons, pushed back at the edges.

Teachers who refused to grade only metrics. Judges who insisted on reading beyond the summary. Nurses who demanded to see the logic behind triage recommendations. Students who wanted explanations, not just answers.

We began to redraw the lines between what we ask of machines and what we reserve for ourselves.

And we discovered, to our surprise, that humanness is not a list of tasks but a way of bearing responsibility.

An AI can generate a poem. It cannot stand in front of a friend and say, “Those are my words, and I meant them.”

An AI can simulate options. It cannot feel the weight of choosing one in the face of uncertainty and living with the consequences.

We rebuilt our systems, slowly, around that difference.

XII. Return with the Boon

“And that,” I told my students, circling back to the front of the classroom in 2050, “is how we got from Daniel’s kitchen to the Flourishing Accord to this very room.”

One of them raised a hand. “So are we… safe now? Did we make it?”

I laughed, not unkindly.

“Safe is not a word I’d use,” I said. “We’re still capable of making spectacularly bad choices. There are still systems that concentrate power in dangerous ways. There are still people who want to exploit every loophole.”

I tapped the screen, pulling up two simple words:

FLOURISHING FUTURE

“What we’ve done,” I said, “is build just enough scaffolding that a flourishing future is possible - not guaranteed, but possible in more places, for more people, than it was in 2025.”

“We have AI systems that help doctors in rural clinics catch things they used to miss. We have translation tools that let activists coordinate across languages. We have models that help us simulate climate interventions before we gamble the atmosphere on them.”

“We also have laws, norms, and habits that say: there are lines we do not cross, even if a model says we could.”

“The boon we brought back from the Underworld wasn’t ‘friendly AGI’ or some perfectly safe superintelligence.”

“It was a much less dramatic realization:

“We do not have to design our systems as if humans are the problem to be optimized away.

“We can design them as if humans are the point.”

I looked around the room.

Some students were taking notes on tablets, styluses moving in little flurries. Some were just watching, the way people do when they’re trying to decide whether they believe you.

“One day,” I said, “your generation will tell stories about us the way we told stories about the Titanomachy and the Labors of Heracles.”

“Please,” someone murmured. “Fewer goats.”

“We’ll see,” I said. “In those stories, we will probably look clumsy and shortsighted. That’s fair. We were.”

“But I hope,” I added, “that when they reach the part about the 2030s and ’40s, and about how close we came to unmaking the world, they also mention this:

“We chose, in just enough places and just enough times, to be stubbornly, audibly, imperfectly human.

“We turned some things off.

“We slowed some things down.

“We refused some ‘optimizations.’

“And in that friction, we found room to ask what kind of future we wanted these extraordinary tools to serve.”

The bell chimed softly - the building’s polite way of saying our time was up.

Students began packing up.

On my way out, I passed the wall display. It was still blank except for a line of text in the corner, a system reminder I hadn’t bothered to dismiss:

No AI assistance was used in the preparation of this session.

This content may contain human error.

I smiled.

“Good,” I said under my breath. “Let it.”